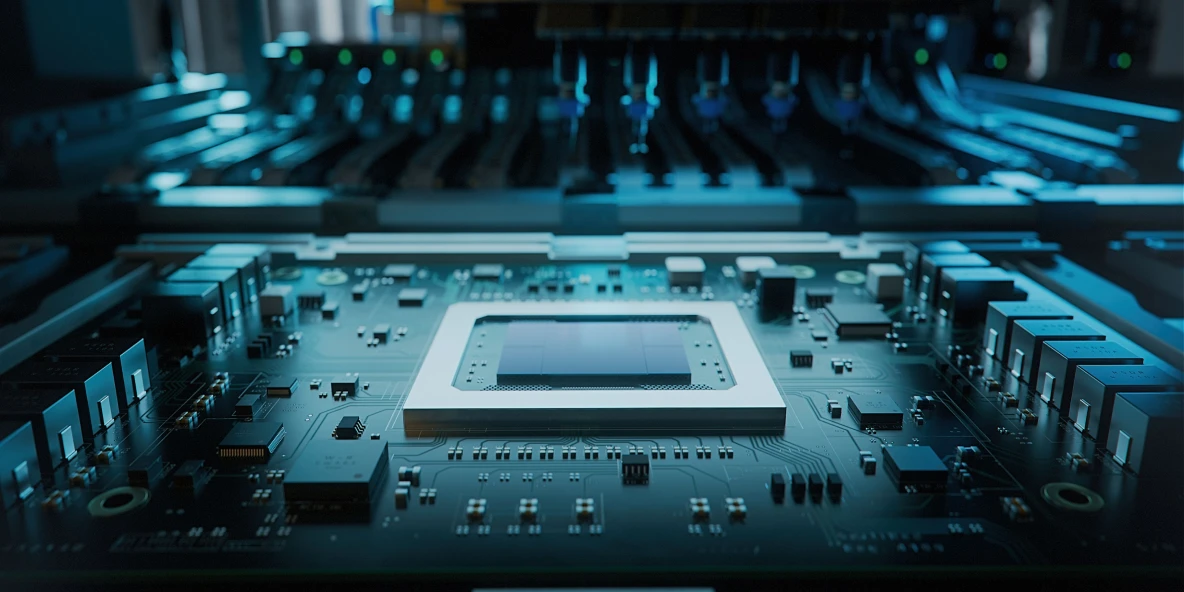

Leverage the power of artificial intelligence and high-performance computing with custom-built NVIDIA H200 SXM GPU Bare Metal Servers, a premium offering on the next-gen AI cloud. Each H200 GPU delivers peak throughput of up to 34 TFLOPS (FP64), 67 TFLOPS (FP32), 1,979 TFLOPS (FP16), and 3,958 TFLOPS (FP8), where the FP16 and FP8 figures are Tensor Core peaks with sparsity, and carries 141 GB of HBM3e memory. The servers are optimised for high-throughput LLM training across 8-GPU NVSwitch configurations.
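To put the per-GPU figures in node-level terms, here is a minimal Python sketch that multiplies them across an 8-GPU configuration and estimates how much memory a model's weights would need. The function names `node_totals` and `weights_size_gb` are hypothetical helpers written for this illustration, and the 70-billion-parameter model is an assumed example. The totals are theoretical peaks, not measured training throughput, and the weight estimate ignores optimizer state, gradients, and activations.

```python
# Per-GPU peak figures quoted above (vendor peak numbers, not measured throughput).
H200_PEAK_TFLOPS = {"FP64": 34, "FP32": 67, "FP16": 1979, "FP8": 3958}
H200_MEMORY_GB = 141
GPUS_PER_NODE = 8  # the 8-GPU NVSwitch configuration mentioned above


def node_totals(gpus: int = GPUS_PER_NODE) -> dict:
    """Sum the per-GPU peak figures across one node (theoretical upper bound)."""
    totals = {f"{name}_TFLOPS": value * gpus for name, value in H200_PEAK_TFLOPS.items()}
    totals["memory_GB"] = H200_MEMORY_GB * gpus
    return totals


def weights_size_gb(params_billion: float, bytes_per_param: int) -> float:
    """Approximate size of the model weights alone, taking 1 GB as 1e9 bytes."""
    # (params_billion * 1e9 parameters) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


if __name__ == "__main__":
    totals = node_totals()
    print("Aggregate node figures:", totals)

    # Assumed example: a 70B-parameter model stored in 2-byte (FP16) weights.
    weights_gb = weights_size_gb(70, 2)
    print(f"Weights: {weights_gb} GB of {totals['memory_GB']} GB node memory")
```

Under these assumptions the weights alone would take roughly 140 GB, close to the capacity of a single GPU, which is why training at this scale is typically spread across all eight GPUs in the node.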

When training large models or moving petabytes of data, every bit of latency adds up. Bare Metal Servers remove the hypervisor layer entirely, so no virtualisation overhead eats into your performance. You get direct access to the hardware, which means your GPUs are dedicated to your workloads rather than shared with other tenants. For compute-heavy applications, that difference shows up fast in your training times and query speeds.